As businesses increasingly adopt large language models (LLMs) to streamline operations, enhance customer experiences, and generate content, the demands on AI infrastructure are growing. LLMs, such as GPT-4 and other cutting-edge models, are revolutionizing industries by providing more accurate and dynamic capabilities in natural language processing. However, as powerful as these models are, they come with significant operational challenges, particularly in how they are monitored and maintained. LLMs require advanced monitoring and observability tools, such as Traceloop’s OpenLLMetry, to collect and publish observability data across various frameworks.

Model evaluation plays a crucial role in monitoring and maintaining LLMs, ensuring that they perform as expected and meet the desired standards.

Traditional AI monitoring approaches often fall short when applied to LLMs due to their immense size, complexity, and frequent updates. These models operate at such a scale that even minor inefficiencies can cause significant disruptions. The need for specialized monitoring solutions that track LLM behavior in real time has become a priority for enterprises looking to stay competitive in this AI-driven world. Effective monitoring ensures that LLMs perform reliably, minimize biases, and operate efficiently, all while staying aligned with business goals.

As we delve deeper into the operational intricacies of LLMs, it becomes clear that a new approach to monitoring is required. Whether managing real-time user interactions or optimizing back-end processing, the role of LLM monitoring is more critical than ever. This article explores why monitoring LLMs is more challenging than traditional models, the tools and strategies available, and how businesses can stay ahead in this rapidly evolving space.

The goal of this article is to provide businesses and AI professionals with a comprehensive understanding of why LLM monitoring has become increasingly important and how they can implement effective strategies. By offering insights into key challenges, tools, and best practices, this guide aims to equip organizations with the knowledge needed to ensure their LLMs operate efficiently and continue to drive innovation.

LLM Monitoring and Observability: Key Differences

While LLM monitoring and observability are related concepts, they are not the same thing. Understanding the distinction between the two is essential for effective LLM management.

Understanding the Distinction Between Monitoring and Observability

LLM monitoring involves tracking LLM application performance through evaluation metrics and methods. This includes metrics such as model accuracy, latency, and throughput. Monitoring provides a snapshot of the system’s performance, allowing developers to identify and address issues as they arise.

LLM observability, on the other hand, is the process that makes monitoring possible by providing full visibility and tracing in an LLM application system. Observability involves tracking the application, prompt, and response to understand how the system is performing. It goes beyond simple metrics to offer a comprehensive view of the system’s behavior, enabling developers to understand the underlying causes of performance issues.

In summary, LLM monitoring is focused on tracking metrics, while LLM observability is focused on understanding the system’s behavior. Both are essential for effective LLM management, and they work together to provide a comprehensive view of the system’s performance. By combining monitoring and observability, businesses can ensure that their LLM applications operate smoothly, efficiently, and securely.

Key Metrics and KPIs for LLM Monitoring

Effective LLM monitoring hinges on tracking several critical metrics and KPIs that provide insights into the model’s performance and operational health. These metrics help organizations identify areas for improvement and ensure that their LLM applications are running optimally.

- Model Accuracy: This metric measures the percentage of correct responses generated by the LLM. High model accuracy is crucial for ensuring that the outputs are reliable and meet the intended use cases. Regularly tracking accuracy helps in identifying any degradation in performance, which could be due to model drift or changes in input data.
- Model Latency: Latency measures the time taken by the LLM to generate a response. Low latency is essential for applications requiring real-time interactions, such as customer service chatbots. Monitoring latency helps in optimizing the model and infrastructure to ensure quick response times.
- Model Throughput: Throughput measures the number of requests processed by the LLM within a given time frame. High throughput indicates that the model can handle a large volume of requests efficiently, which is vital for scalability and performance under load.
- Data Quality: The quality of input data fed to the LLM significantly impacts its performance. Monitoring data quality involves checking for inconsistencies, biases, and relevance of the data. High-quality data ensures that the model’s outputs are accurate and reliable.
- Model Drift: Model drift measures the change in the LLM’s performance over time due to changes in the input data or other factors. Detecting model drift early allows for timely retraining or adjustments to maintain performance levels.
- Response Time: This metric measures the time taken by the LLM to generate a response. Monitoring response time is crucial for applications that require quick interactions, ensuring that the model delivers timely and efficient outputs.
- Resource Utilization: This metric measures the amount of system resources (e.g., CPU, memory, disk space) used by the LLM. Efficient resource utilization is crucial for cost management and ensuring that the model runs smoothly without overloading the infrastructure.
- User Feedback: User feedback measures the satisfaction of users with the LLM’s responses. Collecting and analyzing feedback helps in understanding the model’s real-world performance and identifying areas for improvement.
- Sensitive Data Handling: This metric measures the LLM’s ability to handle sensitive data, such as personally identifiable information (PII). Ensuring that the model processes sensitive data securely and in compliance with regulations is critical for maintaining user trust and avoiding legal issues.

By diligently tracking these metrics and KPIs, organizations can gain comprehensive insights into the performance of their LLM applications, enabling them to make informed decisions and continuous improvements.
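As a minimal sketch of how the latency and throughput metrics above might be computed from request logs, the following Python example summarizes a batch of requests. The `RequestRecord` schema and the sample values are hypothetical and stand in for whatever your logging layer actually records.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    """One completed LLM request (hypothetical log schema)."""
    start_s: float    # request start time, in seconds
    latency_s: float  # time taken to produce the full response, in seconds


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no values to summarize")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records):
    """Return median and tail latency plus throughput (requests per second)."""
    latencies = [r.latency_s for r in records]
    first_start = min(r.start_s for r in records)
    last_end = max(r.start_s + r.latency_s for r in records)
    window_s = last_end - first_start
    return {
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        "throughput_rps": len(records) / window_s if window_s > 0 else float("inf"),
    }


# Illustrative data: one request every 0.5 s, with one slow outlier.
records = [
    RequestRecord(start_s=i * 0.5, latency_s=lat)
    for i, lat in enumerate([0.8, 1.1, 0.9, 3.2, 1.0, 0.7, 1.3, 0.9, 1.0, 1.2])
]
print(summarize(records))
```

Reporting a tail percentile alongside the median is a deliberate choice here: a single slow request barely moves the median but shows up clearly at p95.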

Evaluation Metrics for LLM Performance

Evaluating the performance of Large Language Models (LLMs) is crucial for ensuring their reliability and effectiveness in various applications. Effective LLM monitoring and observability rely on a set of evaluation metrics that provide insights into the model’s performance, accuracy, and responsiveness. Here are some key evaluation metrics for LLM performance:

- Model Accuracy: This metric measures the model’s ability to generate accurate responses to user input. It can be evaluated using precision, recall, and F1-score. High model accuracy is essential for ensuring that the outputs are reliable and meet the intended use cases.
- Perplexity: Perplexity measures the model’s ability to predict the next word in a sequence. Lower perplexity scores indicate better performance, as the model assigns higher probability to the text it actually observes. This metric is particularly useful for evaluating language models in tasks like text generation and completion.
- Data Drift: Data drift refers to changes in the distribution of input data over time. This can significantly affect the model’s performance and accuracy. Monitoring data drift helps in identifying when the input data has deviated from the training data, allowing for timely retraining or adjustments.
- Model Drift: Model drift measures the change in the model’s performance over time. This can be caused by changes in the input data or the model’s parameters. Detecting model drift early is crucial for maintaining consistent performance and avoiding degradation in output quality.
- Response Time: Response time measures the time taken by the model to generate a response to user input. Faster response times are critical for applications requiring real-time interactions, such as customer service chatbots. Monitoring response time helps in optimizing the model and infrastructure to ensure quick and efficient responses.
- Resource Utilization: This metric measures the amount of computational resources used by the model, including CPU, memory, and GPU usage. Efficient resource utilization is crucial for cost management and ensuring that the model runs smoothly without overloading the infrastructure.
- User Feedback: User feedback measures the satisfaction of users with the model’s responses. Collecting and analyzing feedback helps in understanding the model’s real-world performance and identifying areas for improvement. Metrics such as user ratings and feedback forms can provide valuable insights.
- Sensitive Data Handling: This metric measures the model’s ability to handle sensitive data, such as personally identifiable information (PII). Ensuring that the model processes sensitive data securely and in compliance with regulations is critical for maintaining user trust and avoiding legal issues.

By tracking these evaluation metrics, developers can identify areas for improvement and optimize their LLM applications for better performance, accuracy, and user satisfaction. Effective LLM monitoring and observability are essential for ensuring the reliability and effectiveness of LLM applications in various industries.
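To make the perplexity metric above concrete, here is a small Python sketch. It assumes you can obtain per-token natural-log probabilities for a piece of text from your model or provider; the probability values below are made up for illustration.

```python
import math


def perplexity(token_logprobs):
    """Perplexity = exp(-mean natural-log probability of the observed tokens)."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


# Hypothetical per-token probabilities for two responses.
confident = [math.log(p) for p in (0.9, 0.8, 0.95)]
uncertain = [math.log(p) for p in (0.2, 0.1, 0.25)]

print(f"confident: {perplexity(confident):.2f}")
print(f"uncertain: {perplexity(uncertain):.2f}")
```

A model that assigns probability 0.5 to every token has a perplexity of exactly 2, which gives a useful intuition: perplexity behaves like the effective number of equally likely choices the model is weighing at each step.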

In addition to these metrics, it’s also important to consider the following best practices for LLM monitoring and observability:

- Use a Combination of Metrics: Employ a combination of metrics to get a comprehensive view of the model’s performance. This holistic approach ensures that no critical aspect is overlooked.
- Monitor Data Drift and Model Drift: Regularly monitor data drift and model drift to ensure that the model’s performance is not affected by changes in the input data or the model’s parameters. Early detection allows for timely interventions.
- Leverage User Feedback: Actively use user feedback to evaluate the model’s performance and identify areas for improvement. User insights are invaluable for refining model outputs and enhancing user experience.
- Ensure Secure Handling of Sensitive Data: Implement robust measures to ensure that the model handles sensitive data securely and in compliance with regulations. This includes encryption, access control, and anonymization techniques.
- Utilize LLM Observability Tools: Employ LLM observability tools to gain deep insights into the model’s performance and identify areas for improvement. These tools provide comprehensive visibility and tracing capabilities, essential for effective LLM management.

By following these best practices and tracking the evaluation metrics mentioned above, developers can ensure that their LLM applications are reliable, effective, and secure.

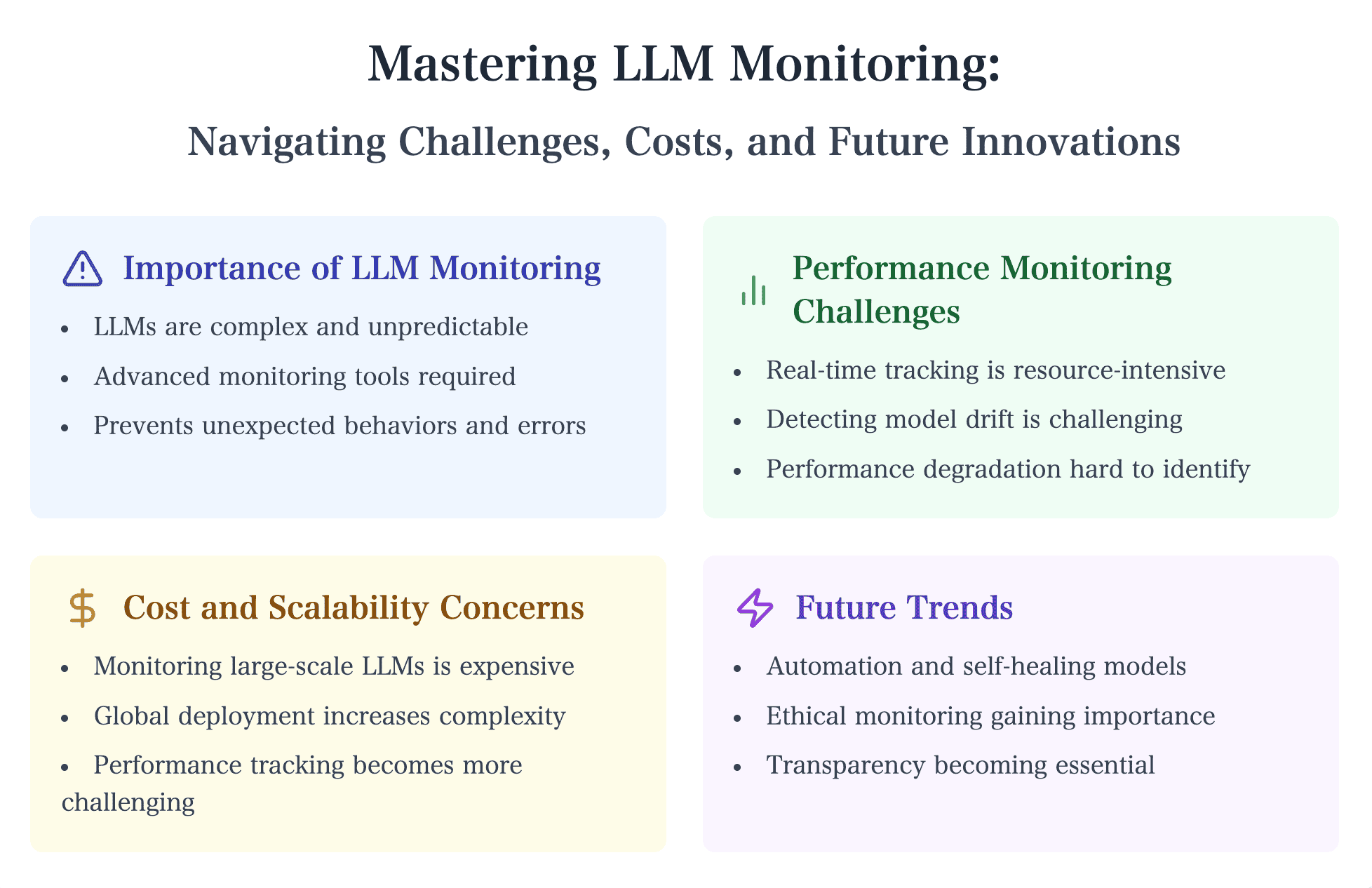

Key Challenges in Monitoring Large Language Models

Monitoring large language models (LLMs) presents a host of challenges that differ from traditional AI models. Given the sheer scale and complexity of LLMs, tracking their performance and behavior in real-time requires sophisticated tools and strategies. This section explores four key challenges businesses face when implementing monitoring systems for LLMs: performance, behavioral issues, scalability, and cost efficiency. LLM observability tools are crucial for maintaining and optimizing the functionality and reliability of LLMs in production settings.

1. Performance Monitoring

The dynamic environments in which LLMs operate require constant performance monitoring to ensure smooth functionality. LLMs, such as those used for real-time customer interactions or content generation, need to maintain low inference latency and high throughput. Monitoring systems must track these metrics to ensure response times are efficient and model output is delivered without delays. However, the challenge lies in the vast computational resources LLMs require. Latency issues may arise as models are deployed across various platforms, from cloud to on-premise servers, and it becomes difficult to optimize performance across these diverse environments.

Moreover, resource utilization is a critical aspect of performance monitoring. LLMs can consume significant GPU and memory resources, especially during inference. Monitoring tools must be equipped to track these resources and provide real-time feedback to prevent bottlenecks that could slow down business operations. Performance monitoring also includes understanding how model updates impact system efficiency, making it a continual and evolving process.

2. Behavioral Monitoring

Behavioral monitoring is equally critical, especially when it comes to predicting and detecting unintended behaviors. LLMs are notorious for producing unexpected outputs, including biased or harmful responses, due to inherent biases in the training data or shifts in input patterns. Monitoring for model drift—where the model’s performance degrades over time as new data diverges from the training data—requires proactive, continuous observation of how the LLM interacts with fresh input.

One major challenge here is detecting these subtle behavioral shifts before they cause significant issues. The complexity of LLMs makes it difficult to predict when and why certain biases or errors will emerge. Effective behavioral monitoring and bias detection rely on creating diverse test cases and monitoring for performance changes across various data inputs and contexts. This approach helps businesses identify risks early and take corrective action before these unintended behaviors affect user experiences.
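One simple way to watch for input patterns diverging from a baseline is to compare the distribution of a cheap input feature over time. The sketch below uses the Population Stability Index (PSI) on prompt length; the choice of PSI, the feature, the bin edges, and the 0.25 threshold (a common rule of thumb, not a standard) are all assumptions made for illustration.

```python
import math


def bin_fractions(values, edges, eps=1e-6):
    """Fraction of values in each bin; bins are (-inf, e0), [e0, e1), ..., [en, +inf)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)  # number of edges at or below v
        counts[idx] += 1
    # eps keeps empty bins from producing log(0) or division by zero.
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, current, edges):
    """Population Stability Index between two samples of one numeric feature."""
    b = bin_fractions(baseline, edges)
    c = bin_fractions(current, edges)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Hypothetical prompt lengths (in tokens) from a reference week and the current week.
baseline_lengths = [20, 35, 50, 60, 80, 120, 150, 200, 40, 90]
current_lengths = [300, 250, 400, 220, 180, 500, 90, 350, 260, 310]
edges = [50, 100, 200]

score = psi(baseline_lengths, current_lengths, edges)
print(f"PSI = {score:.2f}", "-> investigate drift" if score > 0.25 else "-> stable")
```

Prompt length is only a proxy. In practice the same comparison could be run on other features, such as detected language, topic labels, or embedding cluster assignments, to catch shifts that length alone would miss.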

3. Scalability

Scaling LLMs across global infrastructures adds another layer of complexity to monitoring. Many businesses operate LLMs in multiple regions or across cloud-based and on-premise architectures. Each environment may have different performance characteristics, requiring monitoring systems that can handle this distributed infrastructure. Scalability concerns arise not only from technical factors like network latency and resource allocation but also from the challenge of managing LLM versions and updates across a large number of deployments.

As organizations scale LLM usage, they must also ensure that their monitoring systems can scale in parallel. This involves creating frameworks that are adaptable, secure, and capable of handling significant traffic while providing consistent observability across all deployment sites. Without proper scalability, businesses risk creating bottlenecks or having incomplete monitoring coverage, which could result in performance degradation or missed opportunities to optimize resources.

4. Cost and Efficiency

Monitoring LLMs at scale can be resource-intensive, both financially and operationally. The cost of running monitoring systems for large-scale LLMs includes not only the direct cost of compute resources but also the infrastructure to support continuous logging, observability, and real-time analytics. As LLMs grow in size and complexity, businesses must balance the need for comprehensive monitoring with cost-effectiveness.

To manage costs, many organizations are turning to cloud-based observability tools or AI-driven monitoring platforms that can automate some aspects of resource management. Techniques like monitoring only key metrics instead of the entire model and implementing automated scaling for computational resources can help optimize both costs and efficiency. Additionally, using pre-built monitoring solutions offered by cloud providers can reduce operational overhead while still providing robust insights into model performance.
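One way to read "monitoring only key metrics" is to record a few cheap numeric fields for every request while storing the full prompt and response for only a sampled fraction. The sketch below illustrates that idea with deterministic hash-based sampling; the function names, record fields, and 10% default rate are hypothetical.

```python
import hashlib


def keep_full_trace(request_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of requests by hashing their ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform value in [0, 1)
    return bucket < sample_rate


def build_record(request_id, latency_s, prompt_tokens, completion_tokens,
                 prompt, response, sample_rate=0.1):
    """Always keep key metrics; attach the full payload only for sampled requests."""
    record = {
        "request_id": request_id,
        "latency_s": latency_s,
        "prompt_tokens": prompt_tokens,
        "completion_tokens": completion_tokens,
    }
    if keep_full_trace(request_id, sample_rate):
        record["prompt"] = prompt
        record["response"] = response
    return record


sampled = sum(keep_full_trace(f"req-{i}", 0.1) for i in range(10_000))
print(f"{sampled} of 10000 requests would store full payloads")
```

Hashing the request ID rather than calling a random number generator means every service that sees the same request makes the same keep-or-drop decision, so sampled traces stay complete across components.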

Techniques and Tools for Effective LLM Monitoring

As the adoption of large language models (LLMs) accelerates, the need for effective monitoring systems becomes increasingly critical. Monitoring LLMs is no longer just about tracking basic performance metrics; it now involves observability, real-time monitoring, and using AI-driven tools to ensure models perform reliably across diverse environments. Several cutting-edge techniques and tools have emerged to help organizations maintain control over their LLMs while optimizing performance and reducing risks.

Observability Platforms and Metrics Specific to LLM Operations

At the heart of LLM monitoring is observability—the ability to measure and analyze model behavior in real-time. An LLM observability tool, such as Langfuse, is designed specifically for LLM operations, allowing businesses to gain deeper insights into how their models are functioning. These platforms go beyond traditional monitoring tools by offering features like comprehensive logging, metrics collection, and tracing to diagnose performance issues and detect model drift early.

Key observability metrics for LLMs include inference latency, resource utilization, and accuracy across different datasets. By continuously tracking these variables, organizations can pinpoint inefficiencies, predict potential failures, and make timely adjustments. Moreover, these platforms enable the visualization of model behavior over time, making it easier to understand long-term performance trends and identify areas for optimization.

Features and Comparison of LLM Observability Tools

With the growing complexity and deployment of large language models (LLMs), choosing the right observability tool is crucial for effective monitoring and management. Several LLM observability tools are available, each offering unique features and capabilities. Here’s a comparison of some popular tools to help you make an informed decision.

- Lunary: Lunary offers model-independent tracking, making it versatile for various LLMs. Its cloud-based assessment and Radar tool for categorizing LLM answers provide comprehensive insights into model performance and behavior.
- LangSmith: Designed for LangChain users, LangSmith provides tracing, cost analysis, and analytics. These features help in understanding the cost implications and performance metrics of LLM operations, making it a valuable tool for budget-conscious organizations.
- Portkey: Portkey offers an open-source LLM Gateway, a prompt library, and response caching. These features enhance the efficiency and scalability of LLM deployments, making it easier to manage and optimize model performance.
- Helicone: Helicone provides open-source LLM observability with a simple setup requiring just two code changes. It supports multiple LLM endpoints, making it a flexible choice for organizations with diverse LLM deployments.
- TruLens: TruLens focuses on the qualitative analysis of LLM responses, offering a feedback feature and Python-only support. This tool is ideal for organizations looking to delve deep into the quality and relevance of model outputs.
- Phoenix (by Arize): Phoenix offers a comprehensive ML observability platform that supports all ML and LLM models. Its hallucination-detecting tool is particularly useful for identifying and mitigating erroneous outputs, ensuring model reliability.
- Traceloop OpenLLMetry: Traceloop provides an SDK for transmitting LLM observability data to multiple tools. It extracts traces from LLM providers or frameworks and publishes them in OpenTelemetry (OTel) format, offering flexibility and integration capabilities.
- Datadog: Known for its robust infrastructure and application monitoring software, Datadog has expanded to include LLMs and associated tools. It provides out-of-the-box dashboards for LLM observability, making it easy to monitor and analyze model performance.

When choosing an LLM observability tool, consider the following factors:

- Model Support: Ensure the tool supports your specific LLM model to leverage its full capabilities.
- Ease of Setup: Evaluate how easy it is to set up and integrate the tool with your existing LLM application.
- Feature Set: Assess whether the tool offers the features and capabilities you need for comprehensive monitoring and improvement.
- Scalability: Check if the tool can handle large volumes of data and traffic, ensuring it can grow with your needs.
- Cost: Consider the total cost of ownership, including licensing fees, infrastructure costs, and maintenance expenses.

By carefully evaluating these factors and comparing the features and capabilities of different LLM observability tools, organizations can select the best tool to meet their specific needs and requirements, ensuring effective monitoring and optimal performance of their LLM applications.

The Growing Importance of Real-Time Monitoring

Real-time monitoring has become indispensable in managing LLMs effectively. AI-driven monitoring systems can provide automated alerts and insights, allowing businesses to respond to issues like latency spikes or unusual output patterns immediately. In dynamic environments where LLMs are used for customer interactions or data analysis, even a slight delay or error can lead to significant disruptions.

Cloud providers like AWS and Google Cloud have integrated advanced monitoring features into their LLM offerings. For instance, AWS's LLM monitoring tools provide real-time performance tracking and anomaly detection, enabling businesses to set thresholds and receive automated alerts if models deviate from expected behavior. This proactive approach to monitoring helps ensure uninterrupted service, preventing costly downtime or errors in model output.

Additionally, AI-driven monitoring systems often incorporate machine learning algorithms to predict future issues based on historical data. These predictive analytics features allow organizations to act preemptively, scaling resources or adjusting model configurations before problems arise.

Cutting-Edge Monitoring Tools Used by Enterprises

Enterprises are already leveraging advanced tools to monitor LLMs effectively. One example is Datadog, a popular observability platform that has become a go-to for monitoring LLM health. Datadog enables businesses to monitor LLM inference performance, providing a holistic view of how models are handling real-world workloads. Through its robust metrics, logs, and tracing capabilities, Datadog helps enterprises optimize resource allocation, improve response times, and ensure that LLMs deliver accurate and timely outputs.

Another contender in the observability space is New Relic, which also offers LLM monitoring tools. By integrating with cloud platforms and offering AI-driven insights, New Relic helps businesses maintain visibility over LLM operations. The platform's ability to correlate LLM performance with business outcomes is particularly valuable for companies seeking to understand how model behavior impacts user experiences or revenue generation.

Additionally, Lakera.ai offers LLM monitoring solutions that specialize in detecting biases and ensuring model fairness. As the ethical use of AI becomes a more prominent concern, tools like Lakera.ai are helping organizations ensure that their LLMs generate unbiased, responsible outputs, particularly in sensitive applications like hiring or lending.

The Role of Observability in LLM Operations

As large language models (LLMs) grow in complexity and become integral to enterprise AI systems, ensuring their proper functioning requires more than just traditional monitoring techniques. Observability, a concept borrowed from software engineering, has become essential for understanding the internal workings of LLMs. It involves not only tracking basic performance metrics but also capturing a more in-depth view of how these models behave in real time, across multiple dimensions. By collecting and analyzing key telemetry data, observability enables businesses to troubleshoot issues, optimize performance, and ensure that LLMs operate as expected.

Defining Observability for LLMs

In the context of LLMs, observability refers to the ability to measure, monitor, and interpret various signals emitted by these models during their operations. This could include data on inference latency, throughput, model accuracy, and resource consumption. Unlike traditional monitoring, which tends to focus on predefined metrics, observability seeks to give a comprehensive, real-time view of what is happening inside the system. Platforms such as Langfuse and SigNoz are emerging as key players, offering observability tools specifically designed to handle the intricacies of LLMs.

The core principles of observability revolve around three types of telemetry data:

- Logs: Capturing real-time events and changes in the model's behavior, helping identify irregularities or unexpected outputs.
- Metrics: Continuous monitoring of quantitative measures such as model response time, throughput, or error rates.
- Traces: Visualizing the end-to-end journey of an LLM's inference request, allowing for a deeper understanding of where bottlenecks or inefficiencies occur.
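The three telemetry types can be illustrated with a small, standard-library-only Python sketch. The `Telemetry` class, the stage names, and the `call_model` callable are hypothetical stand-ins; a real deployment would more likely emit this data through an observability SDK than keep it in memory.

```python
import json
import time
import uuid
from collections import defaultdict
from contextlib import contextmanager


class Telemetry:
    """In-memory collector for logs, metrics, and trace spans (illustration only)."""

    def __init__(self):
        self.logs = []                    # structured event records (JSON strings)
        self.metrics = defaultdict(list)  # metric name -> list of observed values
        self.spans = []                   # timed steps belonging to a trace

    def log(self, event, **fields):
        self.logs.append(json.dumps({"ts": time.time(), "event": event, **fields}))

    def record(self, name, value):
        self.metrics[name].append(value)

    @contextmanager
    def span(self, name, trace_id, parent=None):
        start = time.perf_counter()
        try:
            yield
        finally:
            duration = time.perf_counter() - start
            self.spans.append({"trace_id": trace_id, "name": name,
                               "parent": parent, "duration_s": duration})
            self.record(f"{name}.duration_s", duration)


def handle_request(telemetry, prompt, call_model):
    """Trace one request through two stages and log a summary event."""
    trace_id = uuid.uuid4().hex
    with telemetry.span("request", trace_id):
        with telemetry.span("build_prompt", trace_id, parent="request"):
            full_prompt = prompt.strip()
        with telemetry.span("model_call", trace_id, parent="request"):
            response = call_model(full_prompt)
    telemetry.log("request_completed", trace_id=trace_id,
                  prompt_chars=len(full_prompt), response_chars=len(response))
    return response


telemetry = Telemetry()
handle_request(telemetry, "  Summarize this ticket.  ", lambda p: p.upper())
print(telemetry.logs[0])
print([span["name"] for span in telemetry.spans])
```

Sharing one `trace_id` across the log line and every span is what lets a slow request be followed from the summary event down to the stage that caused the delay.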

Why Observability is Essential for LLMs

Observability is crucial in LLM operations because it allows for real-time tracking and troubleshooting of issues that are often difficult to predict. Large language models are highly complex, with their behavior depending heavily on the quality of input data, model configuration, and the infrastructure on which they run. By monitoring key telemetry data, organizations can gain insights into how models behave under different conditions and make necessary adjustments before issues escalate into costly failures.

For instance, observability allows for quick detection of model drift, where an LLM's performance degrades over time as it encounters data distributions different from its training set. Without real-time tracking, model drift could go unnoticed until the LLM starts delivering consistently poor results. Observability enables businesses to preemptively address this drift by retraining or recalibrating the model.

Furthermore, observability helps organizations manage their LLMs' resource utilization. Since LLMs are computationally expensive, especially during inference, observability platforms track how efficiently resources like GPUs or cloud instances are being used. This ensures that businesses can maintain high performance without overspending on infrastructure.

Log Analysis, Tracing, and Metrics Collection: The Pillars of LLM Observability

Log analysis, tracing, and metrics collection form the backbone of any LLM observability strategy. Log analysis allows organizations to capture every action the model takes, offering insights into behavior patterns, error rates, or changes in performance. For example, logs can reveal if an LLM starts generating biased responses due to a shift in the input data. This granular visibility is key to detecting issues early and maintaining the model's integrity.

Tracing goes beyond simple logging by allowing businesses to map out an entire request flow through the LLM system. By tracing the steps of each request, from the initial input to the final output, enterprises can pinpoint where delays, errors, or resource bottlenecks occur. This is especially critical in distributed systems where LLMs interact with multiple components, such as APIs, databases, or user interfaces. Tracing offers a bird's-eye view of the model's performance across these systems.

Finally, metrics collection serves as the quantitative pulse of LLM performance. Metrics like model latency, accuracy, and throughput are continuously collected to give businesses a high-level view of their model's health. Real-time dashboards, powered by platforms like Datadog and New Relic, allow data teams to monitor these metrics and set up automated alerts when thresholds are exceeded. This helps prevent sudden drops in performance and ensures that LLMs are always running at optimal efficiency.

Best Practices for Monitoring LLMs

As businesses increasingly rely on Large Language Models (LLMs) for various applications, the importance of robust monitoring systems becomes more critical. Effective monitoring ensures that these models continue to perform optimally, mitigate risks, and remain aligned with business goals. Implementing best practices for monitoring LLMs involves setting up comprehensive alerts, conducting regular model evaluations, tracking user feedback, and safeguarding data privacy. In this section, we explore the best practices that organizations should adopt to ensure the smooth operation of their LLM systems.

1. Setting Up Alerts and Automated Monitoring

One of the first steps in monitoring LLMs is configuring alerts that notify teams when key performance indicators (KPIs) or system behaviors deviate from expected parameters. These alerts help identify issues such as increased latency, errors in inference output, or resource overutilization before they escalate into larger problems. Platforms like Datadog and Langfuse allow businesses to set up real-time alerts based on metrics such as response time, throughput, and memory usage.

Automated monitoring is critical in ensuring that LLMs maintain optimal performance. AI-driven monitoring tools can autonomously track the health of models, detecting patterns that might indicate performance degradation. With real-time alerts, teams can react quickly to unforeseen issues, such as model drift or sudden spikes in resource consumption. By automating much of the monitoring process, organizations can improve system reliability and reduce operational overhead.
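As a rough sketch of threshold-based alerting, the Python example below fires when the rolling mean of a metric exceeds a limit. The class name, the 2.0-second threshold, and the window of three samples are illustrative assumptions; hosted platforms provide their own alert configuration.

```python
from collections import deque


class RollingMeanAlert:
    """Fires when the mean of the last `window_size` samples exceeds `threshold`."""

    def __init__(self, threshold, window_size):
        self.threshold = threshold
        self.samples = deque(maxlen=window_size)  # old samples drop off automatically

    def observe(self, value):
        """Record one sample; return True if the alert condition currently holds."""
        self.samples.append(value)
        if len(self.samples) < self.samples.maxlen:
            return False  # wait for a full window before evaluating
        return sum(self.samples) / len(self.samples) > self.threshold


latency_alert = RollingMeanAlert(threshold=2.0, window_size=3)
for latency_s in [1.0, 1.1, 0.9, 4.5, 5.0, 1.0]:
    if latency_alert.observe(latency_s):
        print(f"ALERT: rolling mean latency above 2.0 s (latest sample {latency_s} s)")
```

Alerting on a rolling mean rather than on individual samples trades a little detection delay for fewer noisy alerts; the window size controls that trade-off.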

2. Regular Model Evaluation

A key best practice for LLM monitoring is regular model evaluation. Since LLMs often deal with changing data inputs and dynamic environments, continuous evaluation ensures that the models perform as intended over time. Regular evaluations allow businesses to assess how well their models are adapting to new information and whether retraining is necessary to improve accuracy or reduce bias.

Evaluations can include performance metrics like accuracy, precision, and recall, but they should also assess qualitative outputs. For instance, monitoring whether the model is generating outputs consistent with brand voice or ethical guidelines is essential in areas like content creation or customer service. As suggested by IBM's guidelines on model drift, continuous testing against diverse data sets helps identify whether an LLM's performance is drifting due to changes in input data distributions, ensuring it remains aligned with expected outcomes.
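For tasks with a known correct answer, such as classifying a support message, precision and recall can be computed directly from a labeled evaluation set. The following is a minimal sketch; the labels and the example data are hypothetical.

```python
def precision_recall_f1(expected, predicted, positive_label):
    """Compute precision, recall, and F1 for one label over paired examples."""
    if len(expected) != len(predicted):
        raise ValueError("expected and predicted must have the same length")
    pairs = list(zip(expected, predicted))
    tp = sum(1 for e, p in pairs if p == positive_label and e == positive_label)
    fp = sum(1 for e, p in pairs if p == positive_label and e != positive_label)
    fn = sum(1 for e, p in pairs if p != positive_label and e == positive_label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical evaluation set: did the model route each message to "refund"?
expected = ["refund", "refund", "other", "refund", "other", "other"]
predicted = ["refund", "other", "other", "refund", "refund", "other"]

precision, recall, f1 = precision_recall_f1(expected, predicted, "refund")
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Re-running the same evaluation set on a schedule, and after every model or prompt change, turns these numbers into a trend that can reveal gradual degradation.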

3. Tracking User Feedback

User feedback is a crucial yet often underutilized aspect of LLM monitoring. By actively tracking how users interact with an LLM, businesses can identify areas for improvement or detect any unintended consequences, such as biased responses or unsatisfactory outputs. Integrating feedback mechanisms allows teams to adjust the model's behavior based on real-world interactions and improve the overall user experience.

For example, platforms like Lakera.ai provide tools to assess and mitigate bias in LLMs by incorporating feedback loops that alert teams to undesirable model behaviors. This feedback not only helps improve the model's responses but also reduces the risk of reputational damage due to biased or harmful outputs.
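A feedback mechanism can be as simple as a thumbs-up or thumbs-down signal stored with the model version that produced the response. The sketch below aggregates such events into a satisfaction rate per version; the event format and version names are assumptions for illustration.

```python
from collections import defaultdict


def satisfaction_by_version(events):
    """Return the share of positive ratings for each model version.

    `events` is an iterable of (model_version, thumbs_up) pairs.
    """
    totals = defaultdict(lambda: [0, 0])  # version -> [positive count, total count]
    for version, thumbs_up in events:
        totals[version][1] += 1
        if thumbs_up:
            totals[version][0] += 1
    return {version: up / total for version, (up, total) in totals.items()}


# Hypothetical feedback events collected from a chat interface.
events = [("v1", True), ("v1", False), ("v2", True), ("v2", True), ("v1", True)]
for version, rate in sorted(satisfaction_by_version(events).items()):
    print(f"{version}: {rate:.0%} positive")
```

Rates based on only a handful of ratings are noisy, so it is worth reporting the sample count next to each rate before drawing conclusions about a version.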

4. Ensuring Data Privacy and Security

Given that LLMs often handle sensitive data, ensuring robust data privacy and security measures is paramount. As highlighted by Datadog and Langfuse, organizations must develop strategies to protect sensitive data and comply with regulations like GDPR or CCPA when processing personal information through LLMs. Proper encryption, access control, and anonymization techniques are essential to safeguard data used in LLM training and inference.

To further ensure privacy, businesses should adopt privacy-by-design practices, embedding security protocols into their LLM architecture. This includes frequent audits of the model’s handling of sensitive data and deploying monitoring tools that flag any unauthorized data access or processing anomalies. Langfuse emphasizes the importance of data traceability in LLM observability, helping organizations track how data is used and ensuring that privacy protocols are respected throughout the model’s lifecycle.
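As one small example of anonymization in a monitoring pipeline, prompts and responses can be scrubbed before they are written to logs or traces. The sketch below uses two deliberately simple regular expressions for emails and phone numbers; these patterns are illustrative assumptions and will miss many real-world formats, so a production system would likely rely on a dedicated PII detection service.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace emails and phone-like digit runs with placeholders before logging."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text
```

For example, `redact("Contact jane.doe@example.com or +1 (555) 123-4567 today")` returns `"Contact [EMAIL] or [PHONE] today"`.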

5. Proactive Risk Management: Bias, Performance Degradation, and Security Vulnerabilities

Proactive identification of risks such as biased behavior, performance degradation, or security vulnerabilities is vital for maintaining the integrity of LLMs. Monitoring systems must be configured to detect bias by continuously analyzing model outputs against ethical guidelines or fairness metrics. Lakera.ai offers tools that help organizations manage these risks and work toward fair and unbiased responses, particularly in critical applications like recruitment or financial services.
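To make "fairness metrics" concrete, one common and simple measure is the demographic parity gap: the difference between the highest and lowest rate of favorable outcomes across groups. The sketch below assumes each logged decision is tagged with a group label; the function names and record layout are hypothetical.

```python
from collections import defaultdict


def positive_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outcomes per group, from (group, outcome) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        positives[group] += int(favorable)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records: list[tuple[str, bool]]) -> float:
    """Largest difference in favorable-outcome rate between any two groups."""
    rates = positive_rates(records)
    if not rates:
        raise ValueError("no records to evaluate")
    return max(rates.values()) - min(rates.values())
```

A gap near zero means groups receive favorable outcomes at similar rates. What counts as an acceptable gap depends on the application and is a policy decision rather than something the code can settle.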

Monitoring tools should also be capable of detecting performance degradation. By evaluating key performance metrics over time, such as response accuracy or latency, businesses can identify when LLMs are underperforming or require retraining. Proactive measures, like retraining models based on fresh data, can mitigate the risk of performance degradation due to model drift or evolving data patterns.
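A degradation check of this kind can be sketched as a comparison between a baseline window and a recent window. The thresholds below (a 0.05 drop in mean accuracy, a 1.5x increase in 95th-percentile latency) are assumptions chosen for illustration, as are the function names.

```python
from statistics import mean


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def check_degradation(baseline_accuracy: list[float], recent_accuracy: list[float],
                      baseline_latency_ms: list[float], recent_latency_ms: list[float],
                      max_accuracy_drop: float = 0.05,
                      max_latency_ratio: float = 1.5) -> list[str]:
    """Return the names of metrics that have degraded relative to the baseline."""
    findings = []
    if mean(baseline_accuracy) - mean(recent_accuracy) > max_accuracy_drop:
        findings.append("accuracy")
    if p95(recent_latency_ms) > max_latency_ratio * p95(baseline_latency_ms):
        findings.append("latency")
    return findings
```

A non-empty result is a signal to investigate, and possibly to retrain; it does not by itself explain whether the cause is model drift, a change in input data, or an infrastructure problem.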

Lastly, securing LLM deployments is critical to mitigating vulnerabilities that could expose sensitive data or compromise business operations. This involves regularly updating security protocols, encrypting sensitive data, and applying real-time monitoring to detect potential breaches or unauthorized access. Observability platforms like Langfuse and SigNoz can support this work by tracing requests through LLM deployments, and both can be run in the cloud or self-hosted on-premises.

Future Trends in LLM Monitoring

As LLMs evolve, so too must the techniques and tools used to monitor them. These models are becoming more complex, capable, and integrated into various business functions, from customer support to advanced data analytics. The future of LLM monitoring is likely to see significant advancements in automation, the emergence of self-healing systems, and a growing focus on ethical AI. This section explores these upcoming trends and how businesses can prepare for the next generation of LLMs.

Increased Automation in Monitoring

One of the most anticipated trends in LLM monitoring is the increased role of automation. As LLMs become more powerful, manual monitoring becomes less feasible due to the sheer volume of data these models process and generate. Automated monitoring solutions will leverage AI and machine learning to continuously observe model behavior, detect anomalies, and trigger alerts without human intervention.

Companies like Google are already experimenting with more advanced AI models integrated into everyday devices, which suggests that monitoring will increasingly need to be embedded into AI infrastructure at a granular level. AI-driven monitoring platforms could not only identify issues faster but also optimize model performance by automatically adjusting resource allocation or tuning parameters. This shift would reduce the operational burden on data science teams and allow them to focus on higher-level tasks like model refinement and strategic development.
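Automated anomaly detection does not have to start with machine learning. A minimal sketch, assuming latency samples are already being collected, is a z-score check that calls an alert hook whenever a new value sits far from the historical mean. The `alert` callback and the threshold of 3 standard deviations are illustrative assumptions.

```python
from statistics import mean, stdev
from typing import Callable


def find_anomalies(history: list[float], new_values: list[float],
                   threshold: float = 3.0) -> list[float]:
    """Return new values more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        raise ValueError("need at least two historical samples")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # With no historical variation, any different value is unusual.
        return [v for v in new_values if v != mu]
    return [v for v in new_values if abs(v - mu) / sigma > threshold]


def monitor(history: list[float], new_values: list[float],
            alert: Callable[[float], None], threshold: float = 3.0) -> int:
    """Call `alert` once per anomalous value and return how many were found."""
    anomalies = find_anomalies(history, new_values, threshold)
    for value in anomalies:
        alert(value)
    return len(anomalies)
```

In practice `alert` might post to a paging system or a chat channel; here it is left as a plain callable so the detection logic stays independent of any vendor.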

Development of Self-Healing Models

A key advancement in the future of LLMs is the concept of self-healing models. Self-healing systems detect and fix issues autonomously, reducing downtime and improving model resilience. Similar to how self-healing technology is being applied to hardware, such as self-healing PCs that can recover from certain malfunctions, AI systems will also become self-correcting.

In an LLM context, self-healing models will automatically recognize when they are underperforming due to factors like model drift or biased outputs. These systems could automatically retrain themselves or adjust internal parameters to maintain accuracy and efficiency. While this technology is still in its early stages, its development will be a game-changer for industries that rely heavily on real-time AI outputs, such as financial services and healthcare.
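Since the technology is still early, the following is only a conceptual sketch of the control loop such a system might use: watch a quality score and, after several consecutive low readings, invoke a remediation hook. The `remediate` callback (which could trigger retraining, a rollback, or a switch to a fallback model) and the `patience` parameter are hypothetical.

```python
from typing import Callable


class SelfHealingMonitor:
    """Trigger a remediation hook after repeated low quality scores."""

    def __init__(self, min_score: float, remediate: Callable[[], None],
                 patience: int = 3) -> None:
        self.min_score = min_score
        self.remediate = remediate
        self.patience = patience
        self._consecutive_low = 0

    def observe(self, score: float) -> bool:
        """Record one score; return True if remediation ran on this observation."""
        if score >= self.min_score:
            self._consecutive_low = 0
            return False
        self._consecutive_low += 1
        if self._consecutive_low >= self.patience:
            self.remediate()
            self._consecutive_low = 0  # start counting again after remediation
            return True
        return False
```

Requiring several consecutive low scores before acting is a deliberate choice: it keeps a single noisy measurement from triggering an expensive retraining run.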

Ethical and AI Governance Monitoring

As AI models become more integrated into business and government, the focus on ethical AI monitoring is intensifying. Concerns around bias, fairness, and accountability are driving the need for transparent and responsible AI systems. Future monitoring tools will not only track performance metrics but also assess the ethical implications of LLM behavior.

Governments and organizations are already taking steps to enforce AI governance policies. For example, the U.S. government's recent AI governance initiative emphasizes the importance of monitoring AI systems for ethical compliance, particularly in federal applications. This trend is expected to extend to private enterprises, pushing them to adopt monitoring frameworks that ensure their LLMs align with ethical standards, reduce bias, and promote fairness.

Preparing for the Next Generation of LLMs

As LLMs continue to advance, organizations need to be proactive in preparing for the challenges and opportunities that come with them. The next generation of LLMs will be larger, faster, and more capable of handling diverse tasks. To keep pace, businesses should invest in the following strategies:

- Adopt AI-Driven Monitoring Tools: To manage the complexity of future LLMs, organizations should adopt AI-driven monitoring tools that can automatically detect issues, optimize performance, and ensure data privacy. Platforms like Datadog and Langfuse are leading the way in providing comprehensive observability tools designed for LLM operations.

- Prioritize Ethical AI Monitoring: With increased scrutiny on the ethical implications of AI, businesses must incorporate ethical monitoring into their LLM workflows. This includes deploying tools that assess model fairness, detect bias, and ensure compliance with ethical standards. Solutions like Lakera.ai offer advanced capabilities for monitoring bias and ensuring ethical AI practices.

- Prepare for Scalability: As LLMs grow in size and capability, scalability will become a critical factor. Organizations should ensure their monitoring systems can handle distributed infrastructures, including cloud-based and on-premises deployments. Tools that offer real-time monitoring and resource optimization will be essential for managing the next wave of LLMs.

- Invest in Self-Healing AI Systems: While still in development, self-healing AI systems represent the future of autonomous model management. Businesses should start exploring early-stage solutions and experiment with systems that can self-correct without human intervention. This investment will future-proof AI infrastructure and enhance operational efficiency.

Additionally, retrieval-augmented generation (RAG) can enhance LLMs by incorporating relevant external data sources into the prompts, which improves model performance by grounding responses in richer context.
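The core RAG idea can be sketched in a few lines: pick the documents most relevant to a question and place them in the prompt ahead of it. The example below scores relevance by simple keyword overlap, which is a stand-in assumption; real systems typically use embedding-based similarity search. All function names are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents sharing at least one word with the query."""
    query_words = tokenize(query)
    ranked = sorted(documents,
                    key=lambda doc: len(query_words & tokenize(doc)),
                    reverse=True)
    return [doc for doc in ranked[:top_k] if query_words & tokenize(doc)]


def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Prepend the retrieved documents to the question as context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

From a monitoring perspective, logging which documents were retrieved for each request makes it possible to tell whether a poor answer came from the model or from the retrieval step.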

The Importance of Monitoring in the Age of LLMs

As LLMs become essential to modern business operations, robust monitoring systems are no longer optional; they are critical for ensuring these models deliver consistent value while minimizing risks. LLMs are powerful, but their complexity requires vigilant oversight. From performance tracking to ethical monitoring, businesses need comprehensive monitoring solutions to maintain model accuracy, security, and operational efficiency.

One of the most significant business advantages of proactive monitoring is improved performance. Monitoring allows for real-time analysis of key metrics, enabling companies to detect issues like latency spikes, resource overuse, or degraded accuracy before they impact user experience. AI-driven monitoring tools provide businesses with the ability to automate these processes, ensuring that LLMs continuously perform at their best without manual intervention. This proactive approach leads to smoother operations and enhances the overall value that LLMs can offer.

Another critical benefit is risk mitigation. By incorporating continuous behavioral monitoring, businesses can reduce the chances of harmful or biased outputs, safeguarding both brand reputation and regulatory compliance. As LLMs process vast amounts of data, monitoring systems that track for ethical breaches or security vulnerabilities become essential for avoiding costly mistakes or breaches. This is especially relevant in highly regulated industries such as finance and healthcare, where data security and ethical AI are paramount.

Additionally, effective LLM monitoring drives cost efficiency. Large models require significant computational resources, and without proper monitoring, companies risk overspending on infrastructure. Observability platforms and monitoring tools optimize resource utilization, ensuring that businesses only use what is necessary while maximizing the return on their AI investments. This optimization not only cuts costs but also contributes to a more sustainable and scalable AI strategy.
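Cost monitoring often starts with token accounting. The sketch below estimates the cost of each request from its token counts and checks the running total against a daily budget. The per-1,000-token prices are placeholder assumptions and do not reflect any provider's actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices per 1,000 tokens, in a currency of your choice."""
    input_per_1k: float
    output_per_1k: float


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimate the cost of one request from its token counts."""
    return (input_tokens / 1000 * pricing.input_per_1k
            + output_tokens / 1000 * pricing.output_per_1k)


def over_budget(costs: list[float], daily_budget: float) -> bool:
    """True when the summed request costs exceed the daily budget."""
    return sum(costs) > daily_budget
```

Tracking cost per request, rather than only a monthly invoice total, makes it easier to spot which prompts or features are driving spend.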

To ensure long-term success and innovation, businesses must invest in the right monitoring tools and strategies. This includes selecting observability platforms that align with their specific use cases, training teams to interpret and act on monitoring data, and adopting AI-driven solutions that automate and enhance the monitoring process. By doing so, companies will be well-positioned to leverage the full potential of LLMs while minimizing the risks associated with their complexity.

In conclusion, LLM monitoring is vital for the future of AI-powered business operations. By embracing proactive, automated, and ethical monitoring practices, organizations can improve performance, reduce risks, and drive cost efficiency, all while preparing for the advancements in LLM technology that lie ahead. Investing in comprehensive monitoring systems today is the key to unlocking the full potential of LLMs and ensuring their role in driving innovation and success in the years to come.

References

- Arize: Large Language Model Monitoring Observability

- Fiddler: LLM Monitoring: The Key to Successful LLM Deployments

- Langfuse: Security Overview

- Datadog: LLM Observability

- Lakera: LLM Security

- SigNoz: LLM Observability

- Langfuse: Tracing

- AWS: Techniques and Approaches for Monitoring Large Language Models on AWS

- Giselle: How AI and Prompt Engineering Are Revolutionizing Software Testing in 2024

- Giselle: AI in Content Creation: Innovations, Challenges, and What's Next

- Giselle: Generative AI: Unlocking the Future of Business Productivity and Economic Growth
